Last year, a mid-size European property insurer discovered something uncomfortable during a routine board review. They had spent €40 million building a data lake over three years. It ingested 2.3 billion records from claims, underwriting, distribution, and third-party feeds. The infrastructure was modern. The governance was textbook.

And yet, when the chief underwriting officer asked a deceptively simple question, "Which of our commercial clients are likely to file a large claim in the next 90 days?", the room went silent.

The data existed. The answer didn't.

This is not an infrastructure problem. It's a strategic one, and it's more common than most executives care to admit. It's the defining gap between insurers who store data and insurers who actually activate it.

The Storage Trap

Most insurers have convinced themselves that data maturity is a function of how much they collect and how cleanly they warehouse it. That's a bit like measuring a library's value by counting the books on the shelves, while ignoring whether anyone's reading them.

The pattern plays out the same way almost everywhere. Phase one: consolidate legacy systems into a centralized platform. Phase two: hire a chief data officer, build dashboards, run a few pilot models. Phase three: stall. The dashboards look polished. The pilots never quite make it to production. The CDO quietly starts updating their LinkedIn.

What actually went wrong? It's rarely technical debt. It's strategic ambiguity. Nobody sat down and agreed on what the data was for. And without that clarity, even well-funded data programs drift into becoming cost centers.

Four Stages of Data Activation

Insurers that manage to break out of this trap tend to follow a distinct maturity arc. The real progression is in how tightly they connect data to decisions. Most of the industry, if we're being candid, is stuck somewhere between stage one and stage two.

So the real question becomes: how do you move beyond this and progress toward true data-driven execution?

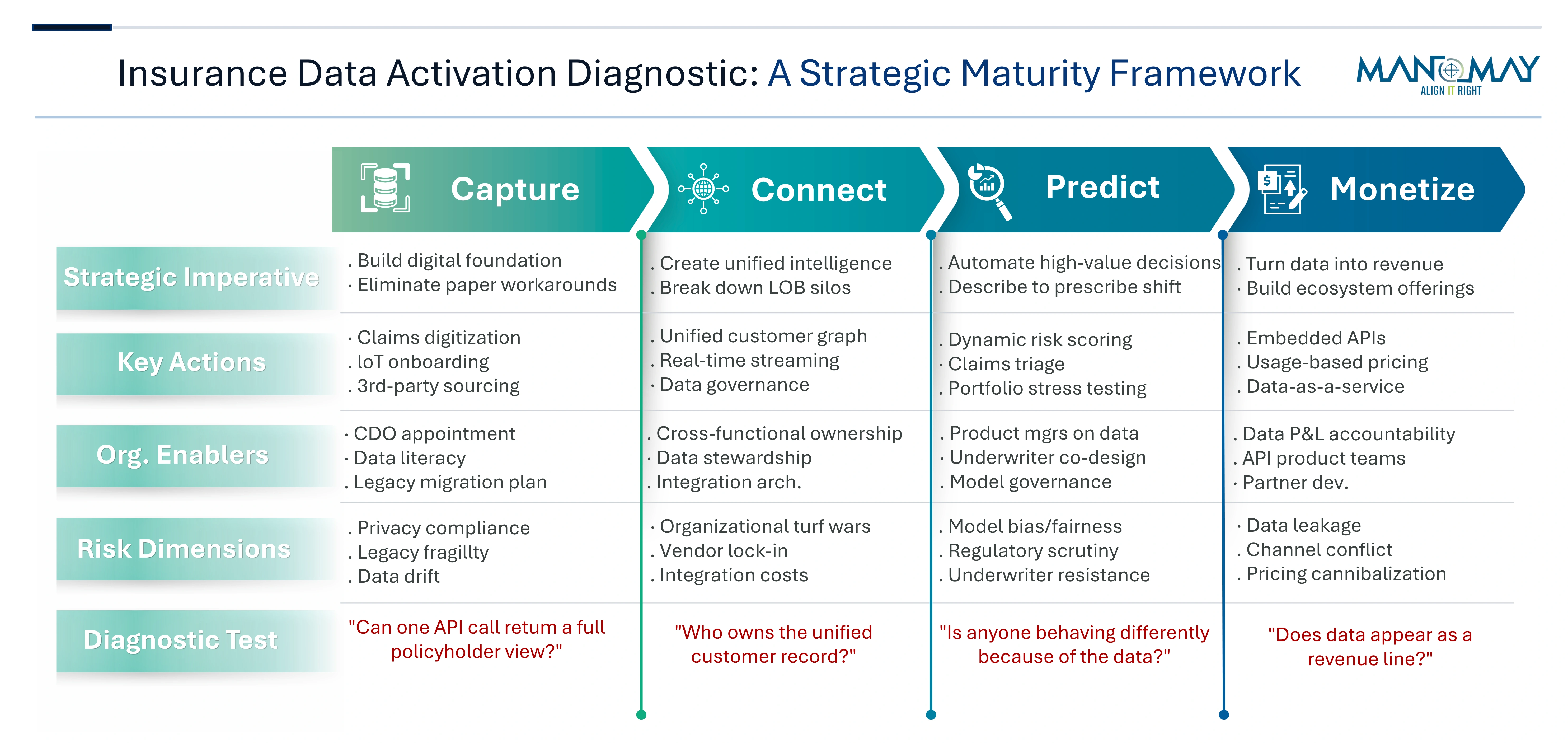

This is where Manomay's Data Activation Diagnostic Framework comes in. It is designed to evaluate how effectively data can be activated across decisions, workflows, and frontline operations.

Stage 2: Connect. Build the graph. Link the policyholder in commercial auto to the same entity in general liability and workers' comp. Merge internal signals with external ones: weather patterns, court filings, telematics, property imagery. On paper, this reads like a technology challenge. In reality, this is where most ambitious programs go to die. Not because the integration fails, but because organizational turf wars over who owns the unified view grind everything to a halt.
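To make the "link the policyholder" idea concrete, here is a minimal sketch of cross-line entity linking. It is an illustration under stated assumptions, not a description of any carrier's actual method: the record fields (`line`, `name`, `tax_id`), the `normalize` and `link_entities` helpers, and the sample data are all hypothetical, and a real program would use far more careful matching than a shared tax ID or a cleaned-up name.

```python
from collections import defaultdict

# Hypothetical legal suffixes to ignore when comparing company names.
LEGAL_SUFFIXES = {"inc", "llc", "ltd", "gmbh", "corp"}


def normalize(name: str) -> str:
    """Lowercase a name, drop punctuation and common legal suffixes."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    tokens = [t for t in cleaned.split() if t not in LEGAL_SUFFIXES]
    return " ".join(tokens)


def link_entities(records):
    """Group records that share a tax ID or a normalized name (union-find)."""
    parent = list(range(len(records)))

    def find(i):
        # Walk up to the root, compressing the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    def union(i, j):
        ri, rj = find(i), find(j)
        if ri != rj:
            parent[max(ri, rj)] = min(ri, rj)

    first_seen = {}  # matching key -> index of the first record carrying it
    for idx, rec in enumerate(records):
        keys = [("name", normalize(rec["name"]))]
        if rec.get("tax_id"):
            keys.append(("tax", rec["tax_id"]))
        for key in keys:
            if key in first_seen:
                union(idx, first_seen[key])
            else:
                first_seen[key] = idx

    # Collect the lines of business held by each linked entity.
    groups = defaultdict(list)
    for idx, rec in enumerate(records):
        groups[find(idx)].append(rec["line"])
    return sorted(sorted(lines) for lines in groups.values())


# Invented sample data: three records for one client, one for another.
records = [
    {"line": "commercial_auto", "name": "Acme Logistics, Inc.", "tax_id": "DE123"},
    {"line": "general_liability", "name": "ACME Logistics", "tax_id": None},
    {"line": "workers_comp", "name": "Acme Logistics Holdings", "tax_id": "DE123"},
    {"line": "commercial_auto", "name": "Borealis Freight Ltd", "tax_id": "DE999"},
]
linked = link_entities(records)
print(linked)
```

In this toy data, the three Acme records collapse into one entity spanning three lines of business, even though no single field matches across all of them. The matching logic is the easy part; deciding which team owns the resulting unified view is where the turf wars begin.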

Stage 3: Predict and Scale. Deploy models that change outcomes, not just describe them. Dynamic risk scoring that adjusts mid-policy. Claims triage that routes the right adjuster to the right case before a file is even opened. Propensity models that tell distribution which client to call this week, and, just as critically, which to leave alone. The real difference between stage two and three isn't technical sophistication. It's whether anyone in the organization is behaving differently because of the data.

Stage 4: Monetize. The rarest stage, and the one that demands the biggest mindset shift. This is where data becomes a product, not just a capability. Usage-based pricing that rewards safer behavior in real time. Embedded insurance APIs that let a car manufacturer offer coverage at the point of sale, priced on the insurer's proprietary risk model. Data-as-a-service offerings sold to reinsurers, brokers, or adjacent industries. Few carriers reach this point because it requires thinking about data as a revenue line, not a line item in the IT budget.

The Organizational Iceberg

Frameworks are clean. Execution is messy. And the mess is almost always human, not technical.

The hardest part of data activation isn't building the model - it's getting the underwriter to trust it. For example, one North American carrier we worked with introduced an AI-driven pricing tool that was technically superior to the incumbent process by every measurable standard. Adoption took 18 months, and even then the tool never delivered the expected outcomes. The underwriters didn't resist the tool because it was wrong. They resisted it because nobody involved them in its design.

This is not an isolated issue - it reflects a broader set of structural barriers that repeatedly surface across insurers:

- Ownership Fragmentation: When data sits at the intersection of actuarial, claims, IT, and distribution, everyone assumes someone else is driving. Shared accountability sounds collaborative on paper. In practice, it means no accountability at all.

- Pilot Purgatory: Most insurers run somewhere between five and fifteen data pilots at any given time. A handful of them actually work. But the ones that do get stuck in a deployment queue because the architecture team is still finishing last year's migration. There's roughly a six-month window after a successful pilot before the organization's enthusiasm fades. Miss that window, and the momentum doesn't just slow - it evaporates.

- Regulatory Asymmetry: Predictive models that improve loss ratios can simultaneously introduce fair-lending or discrimination exposure, sometimes in ways that aren't obvious until a regulator starts asking questions. The carriers that navigate this well don't treat compliance as a gate at the end of the process - they embed regulatory thinking into model design from day one. The ones that don't often find themselves explaining their pricing decisions after the fact, rather than confidently standing behind them.

What The Best Carriers Do Differently

- They start with the decision, not the data. Instead of asking "what can we do with all this information," they identify the three to five highest-value decisions in the business (where to deploy capital, which claims to investigate, which renewals to fight for) and work backwards to the data, models, and workflows required. Everything else is a distraction.

- They fund data like a product, not a project. Projects have end dates. Products have roadmaps, users, and P&L accountability. The best carriers assign product managers to their data capabilities, measure adoption the way a software company would, and iterate based on actual user feedback, not steering committee opinions formed in a conference room.

- They accept imperfection as a feature. This one cuts against the grain in an industry built on precision, but it holds up. A model that's 70 percent accurate and deployed will outperform a 95 percent model stuck in validation. Speed of learning compounds. The insurers that ship early and correct course are steadily pulling ahead of those still waiting for a level of certainty that, in practice, never quite arrives.

That is ultimately where the real shift happens. From chasing theoretical perfection to building systems that learn, adapt, and improve in the flow of business.

The reframe is simple. The question for every insurance CEO isn't whether to invest in data - that ship sailed a decade ago. It's about what that investment is actually enabling in practice.

Want to know more? Let's talk about how Manomay's Data Activation Diagnostic Framework can help you assess where you are today - and what it takes to move forward.

Biz Tech Insights

Editorial Board

Providing strategic clarity in an era of technological acceleration. All perspectives are grounded in rigorous industry analysis.